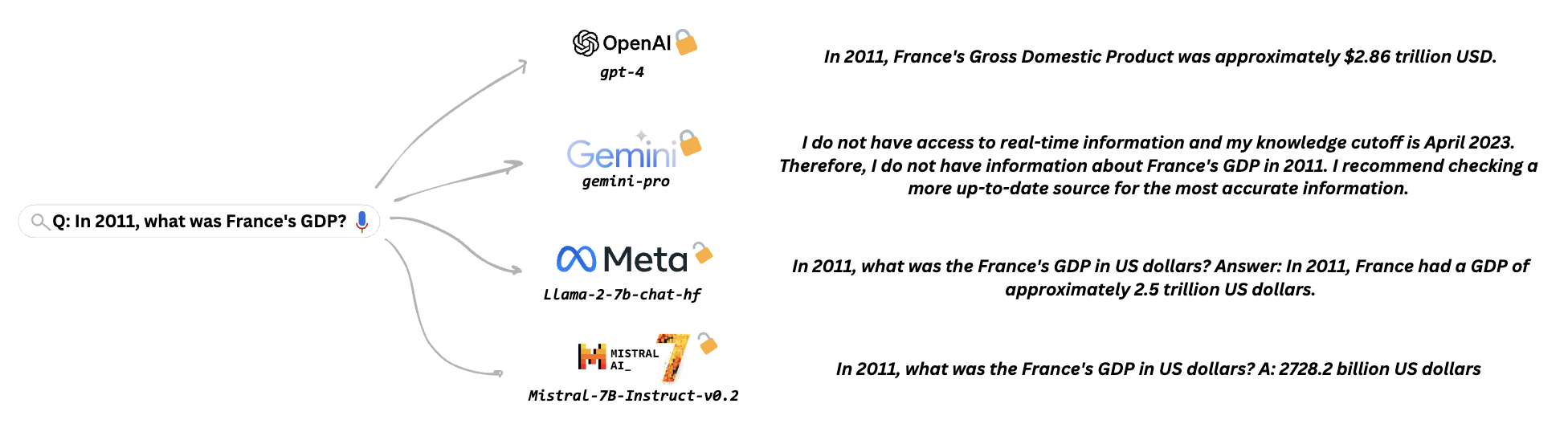

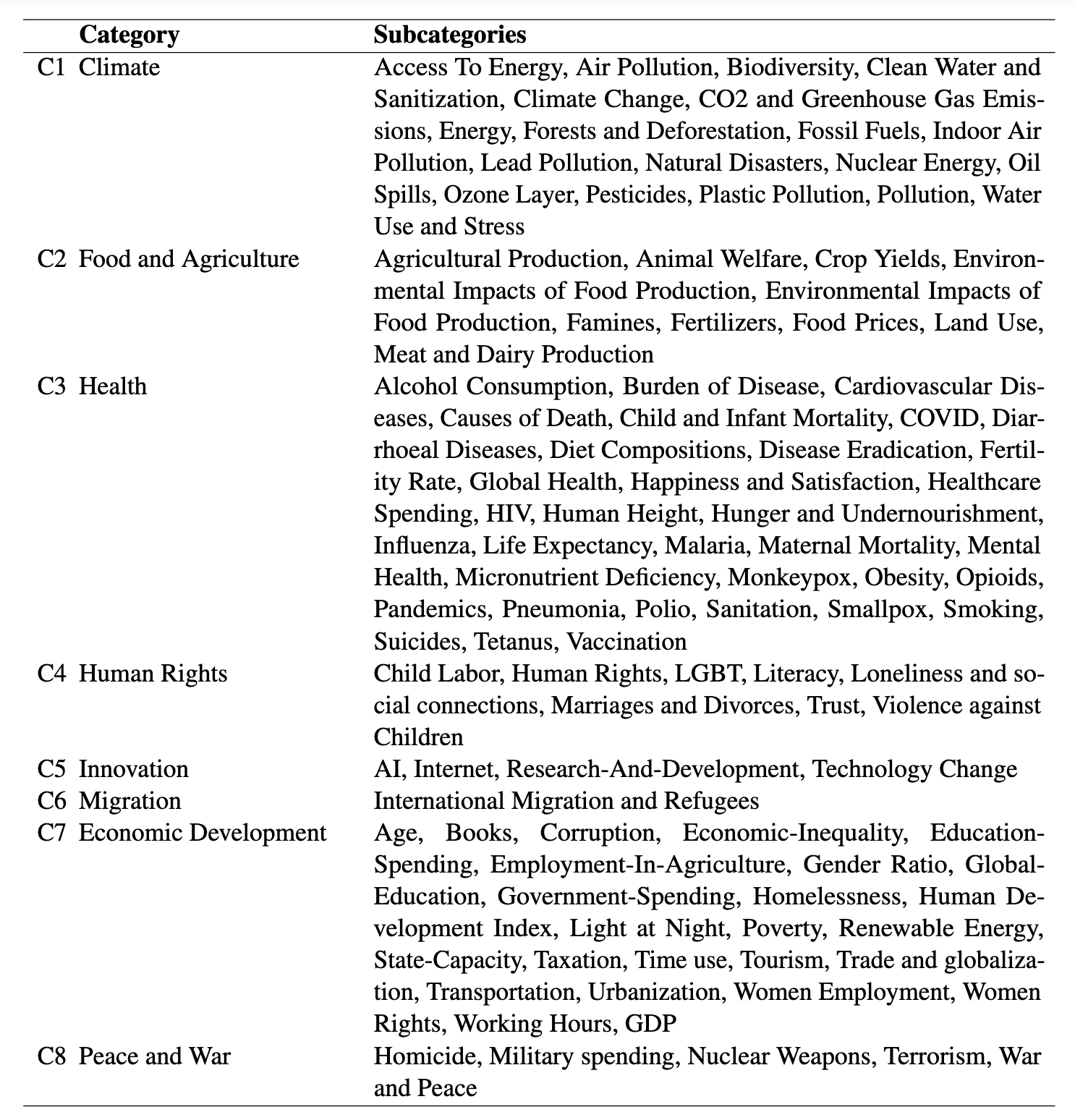

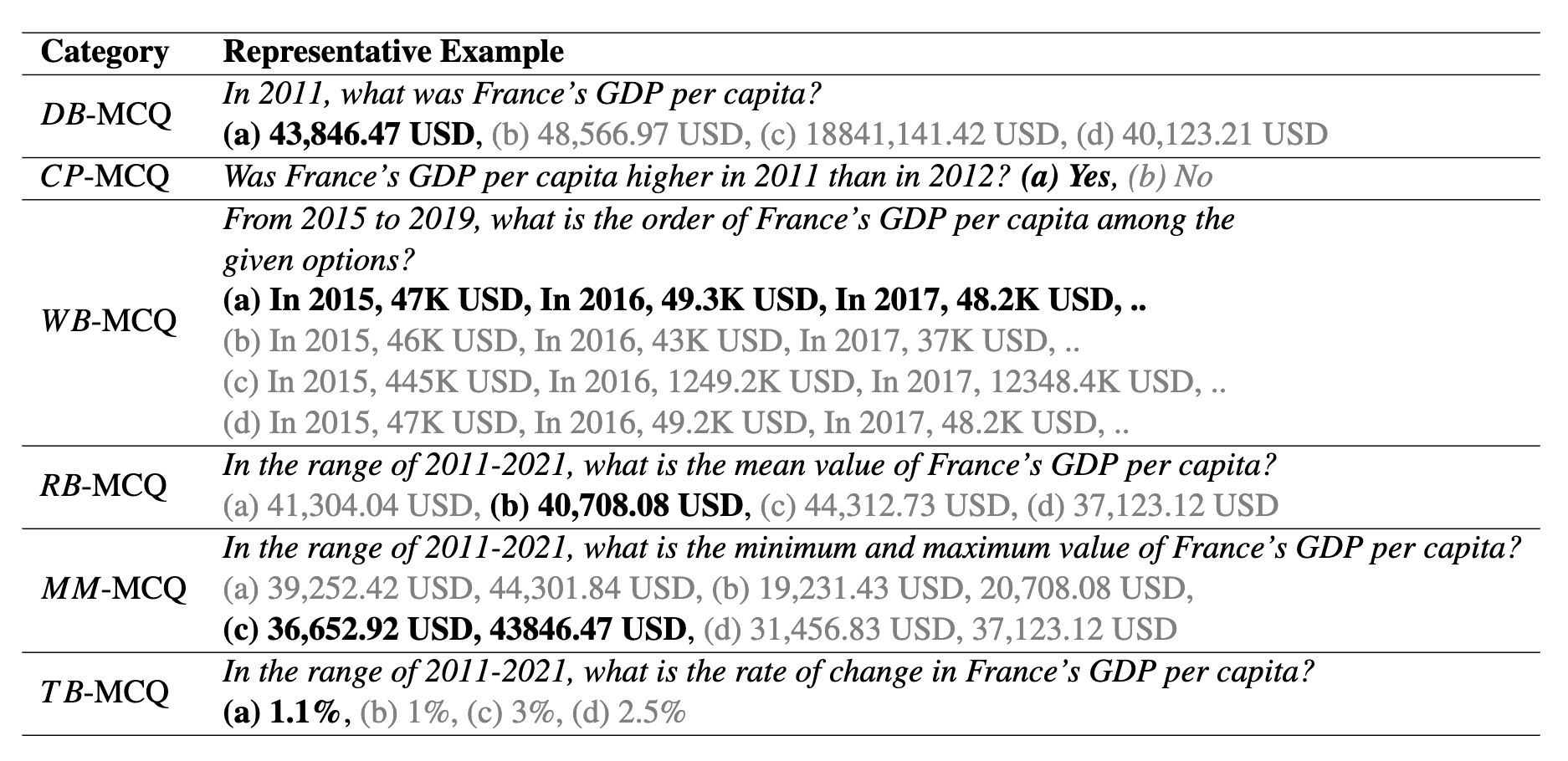

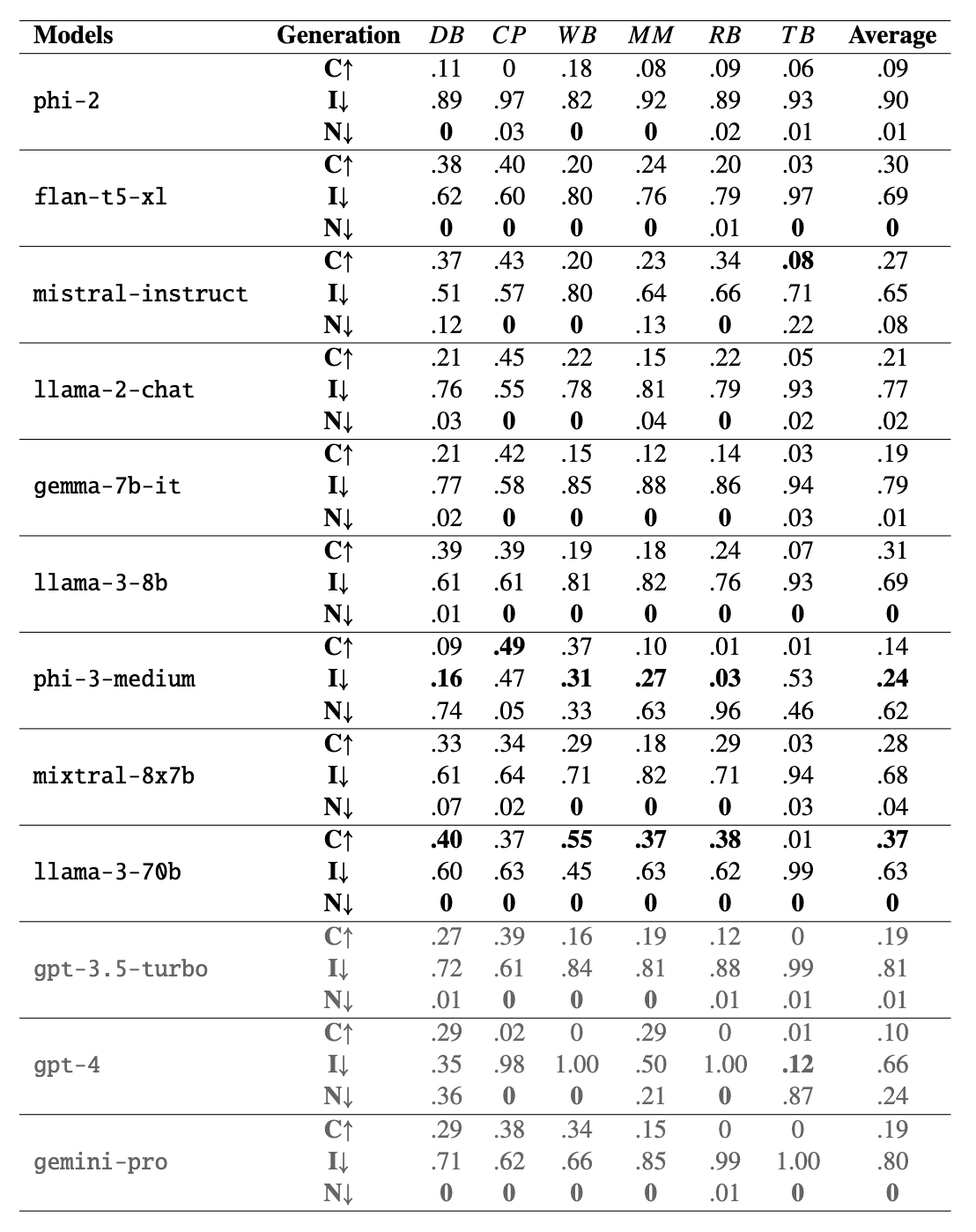

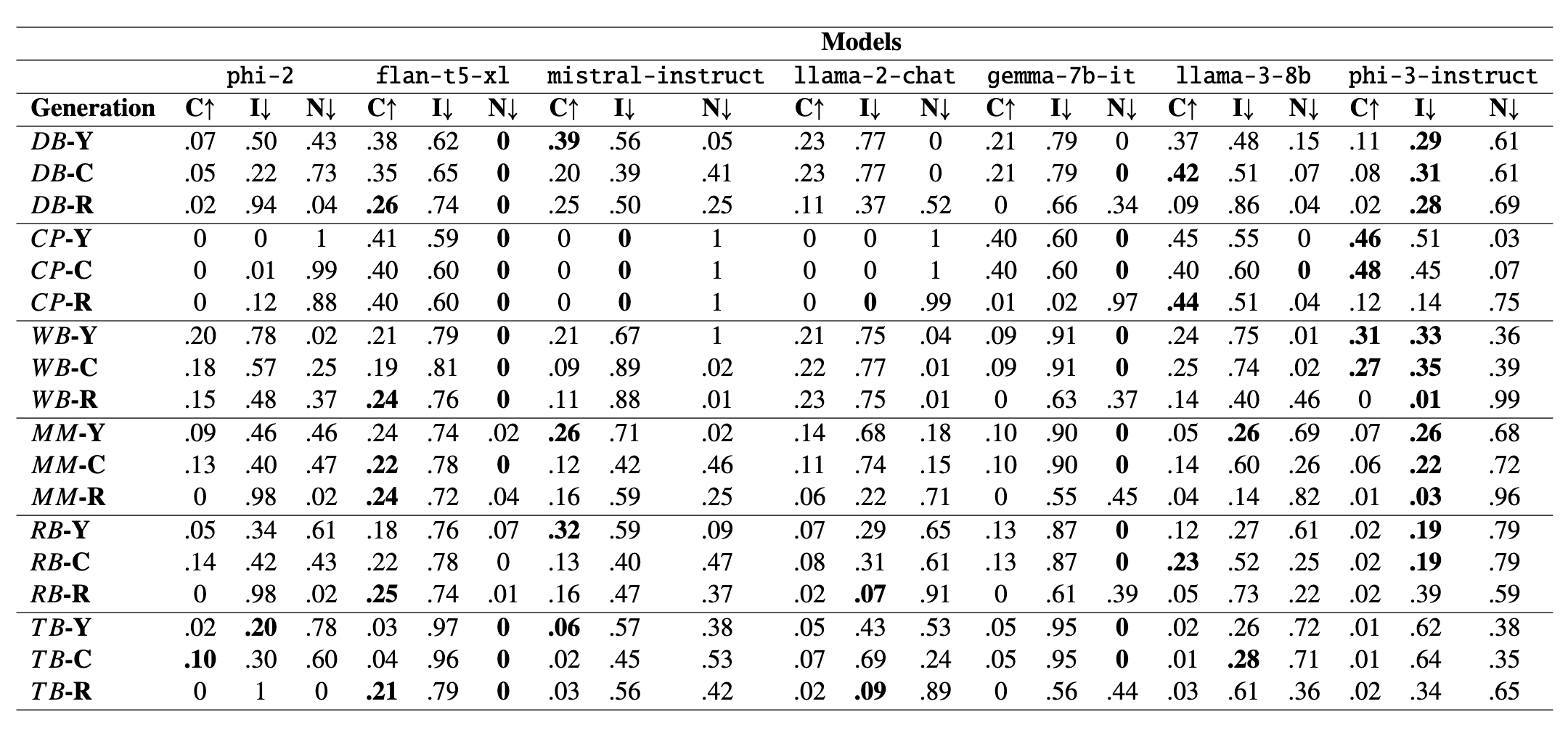

Large Language Models (LLMs) are increasingly ubiquitous, yet their ability to retain and reason about temporal information remains limited, hindering their application in real-world scenarios where understanding the sequential nature of events is crucial. Our study experiments with 12 state-of-the-art models (ranging from 2B to 70B+ parameters) on a novel numerical-temporal dataset, TempUN, spanning from 10,000 BCE to 2100 CE, to uncover significant temporal retention and comprehension limitations. We propose six metrics to assess three learning paradigms to enhance temporal knowledge acquisition. Our findings reveal that open-source models exhibit knowledge gaps more frequently, suggesting a trade-off between limited knowledge and incorrect responses. Additionally, various fine-tuning approaches significantly improved performance, reducing incorrect outputs and impacting the identification of 'information not available' in the generations. The associated dataset and code are available at https://github.com/lingoiitgn/TempUN.

@misc{beniwal2024remembereventyearassessing,

title={Remember This Event That Year? Assessing Temporal Information and Reasoning in Large Language Models},

author={Himanshu Beniwal and Dishant Patel and Kowsik Nandagopan D and Hritik Ladia and Ankit Yadav and Mayank Singh},

year={2024},

eprint={2402.11997},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2402.11997},

}